How NVIDIA Spectrum-X and MRC Are Redefining AI Networking at Scale

The Race for AI Infrastructure

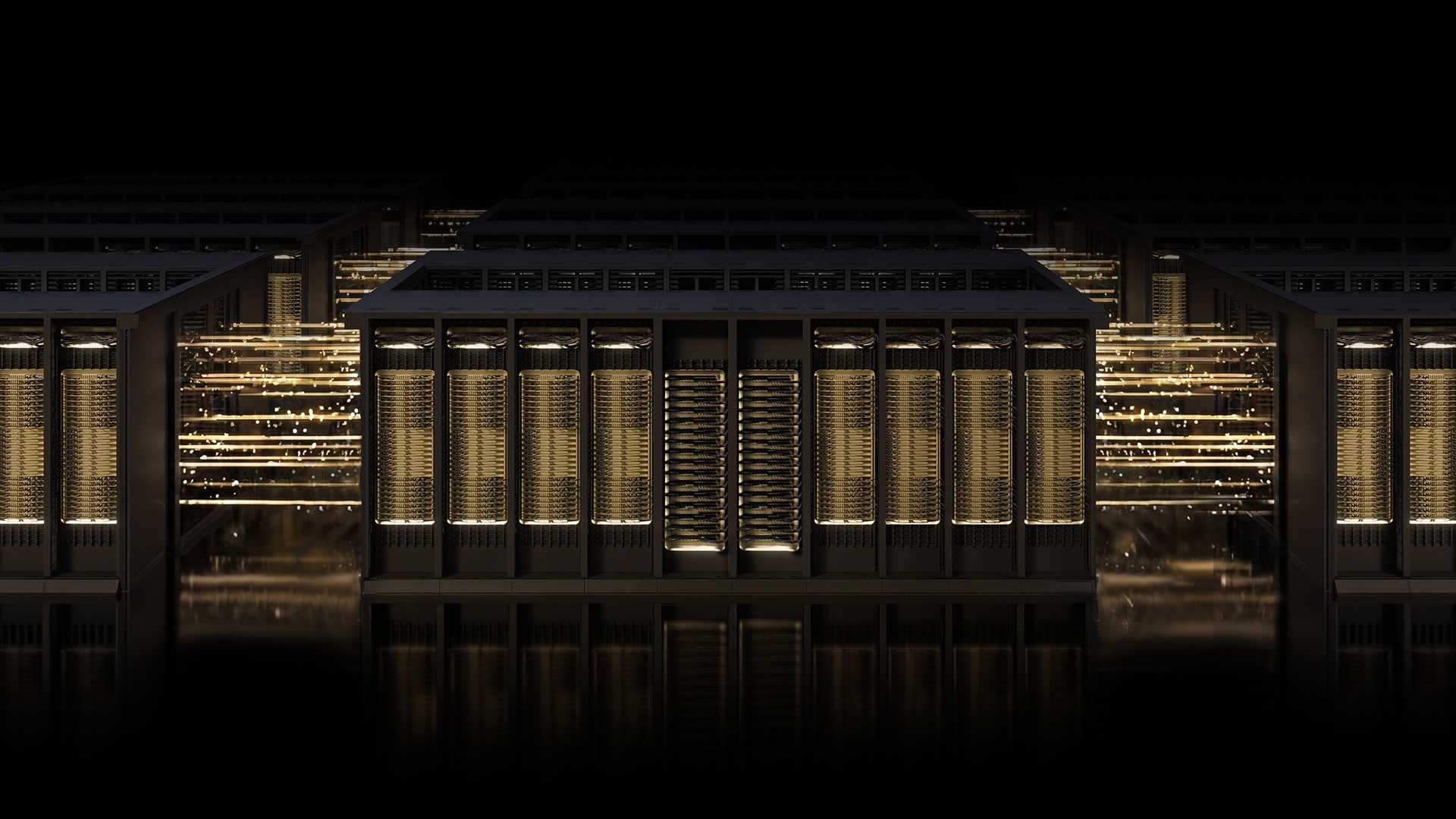

Building the world's most advanced AI factories requires networking that can match the speed and ambition of artificial intelligence itself. As organizations push toward gigascale models—those with trillions of parameters—the underlying fabric must deliver uncompromising performance, resilience, and scalability. NVIDIA's Spectrum-X Ethernet scale-out infrastructure has emerged as the leading solution, now enhanced with a breakthrough protocol called Multipath Reliable Connection (MRC).

The Challenge of Scaling AI Networks

Traditional Ethernet networks struggle under the demands of large-scale AI training. As workloads multiply, network congestion and data loss can idle expensive GPUs, slashing utilization and extending training times. The industry needed a new approach—one that treats networking as a co-designed element of the AI compute stack, not an afterthought.

Enter NVIDIA Spectrum-X, an open, AI-native Ethernet fabric purpose-built to address these exact pain points. Now, with the introduction of MRC, this platform takes a giant leap forward.

What Is MRC and Why It Matters

MRC is an RDMA transport protocol that revolutionizes how data moves across AI fabrics. Instead of relying on a single path for each RDMA connection—like a one-lane road serving an entire town—MRC enables a single connection to distribute traffic across multiple network paths simultaneously. Think of it as replacing that narrow road with an intelligent street grid, where a real-time traffic app reroutes vehicles around jams and closures automatically.
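To make the road analogy concrete, here is a minimal sketch comparing per-path load when one connection is pinned to a single path versus spread across several. It is a toy model, not the MRC specification: the function names, the round-robin spraying policy, and the packet and path counts are all assumptions chosen for illustration.

```python
from collections import Counter


def single_path(num_packets: int, num_paths: int, flow_id: int) -> Counter:
    """Pin every packet of a connection to one path derived from the flow id."""
    path = flow_id % num_paths  # hypothetical stand-in for a flow hash
    return Counter({path: num_packets})


def multipath_spray(num_packets: int, num_paths: int) -> Counter:
    """Spread the packets of one connection across all paths, round-robin."""
    load = Counter()
    for seq in range(num_packets):
        load[seq % num_paths] += 1
    return load


pinned = single_path(num_packets=1000, num_paths=4, flow_id=7)
sprayed = multipath_spray(num_packets=1000, num_paths=4)

print("busiest path, single-path:", max(pinned.values()))
print("busiest path, multipath:  ", max(sprayed.values()))
```

In this toy setup the pinned connection puts all of its packets on one path, while the sprayed connection divides them evenly, which is the intuition behind the street-grid comparison.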

This innovation was co-developed by NVIDIA, Microsoft, and OpenAI, and has already proven its value in production. As Sachin Katti, head of industrial compute at OpenAI, noted: "Deploying MRC in the Blackwell generation was very successful and was made possible by a strong collaboration with NVIDIA. MRC’s end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale."

Real-World Deployments and Industry Validation

The impact of MRC extends beyond OpenAI. Two of the world's largest AI factories, Microsoft’s Fairwater and Oracle Cloud Infrastructure’s Abilene data center, rely on MRC to meet performance, scale, and efficiency targets. These facilities are purpose-built for training and deploying frontier large language models (LLMs).

NVIDIA has a longstanding collaboration with Microsoft on infrastructure for next-generation AI, and Oracle’s Abilene data center likewise uses MRC to keep throughput and uptime consistent. The result: organizations can run large-scale AI models with confidence that the network will not become the bottleneck.

Importantly, MRC started as a proprietary optimization on Spectrum-X hardware but has now been released as an open specification through the Open Compute Project (OCP). This move democratizes the technology, enabling the wider ecosystem to benefit from gigascale AI networking.

Key Technical Benefits of MRC

MRC delivers three core advantages that directly translate to higher GPU utilization and faster training times.

Optimal GPU Utilization

By load-balancing traffic across all available paths, MRC ensures every GPU receives the bandwidth it needs throughout a training run. Even under heavy congestion, the protocol dynamically avoids overloaded paths in real time, maintaining sustained high bandwidth.
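The idea of steering around overloaded paths can be sketched as a simple selection rule. The example below is an illustration under stated assumptions, not MRC's actual algorithm: `pick_path`, `send`, the queue-depth metric, and the `congested` flag set are hypothetical names, and a real fabric would derive congestion signals from switch and NIC telemetry rather than a static set.

```python
from typing import Dict, Set


def pick_path(queue_depth: Dict[int, int], congested: Set[int]) -> int:
    """Return the least-loaded path that is not currently flagged as congested."""
    candidates = [p for p in queue_depth if p not in congested]
    if not candidates:
        # Every path is flagged; fall back to the least-loaded path overall.
        candidates = list(queue_depth)
    # Break ties on path id so the choice is deterministic.
    return min(candidates, key=lambda p: (queue_depth[p], p))


def send(num_packets: int, queue_depth: Dict[int, int], congested: Set[int]) -> Dict[int, int]:
    """Place packets one at a time, re-evaluating the best path for each."""
    for _ in range(num_packets):
        queue_depth[pick_path(queue_depth, congested)] += 1
    return queue_depth


# Path 2 is flagged as congested, so traffic is shared among paths 0, 1 and 3.
depths = send(90, {0: 0, 1: 0, 2: 0, 3: 0}, congested={2})
print(depths)
```

Re-evaluating the choice per packet, rather than per connection, is what lets the toy sender react as soon as a path is flagged.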

Resilience and Fault Tolerance

Packet loss is inevitable in large-scale fabrics, but MRC's intelligent retransmission enables rapid, precise recovery. Short-lived interruptions have minimal impact on long-running jobs, which helps avoid costly GPU idle time and keeps frontier training runs efficient.
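The article does not describe how MRC's retransmission works internally, so the sketch below only illustrates the general idea of "precise" recovery using a generic selective-retransmission model. It compares resending everything from the first gap (go-back-N style) with resending only the missing sequence numbers. All names and numbers here are assumptions for illustration.

```python
from typing import List, Set


def go_back_n_resend(total: int, lost: Set[int]) -> int:
    """Count packets resent if the sender restarts from the first lost sequence number."""
    return total - min(lost) if lost else 0


def selective_resend(total: int, lost: Set[int]) -> List[int]:
    """List the sequence numbers resent when only the reported gaps are retransmitted."""
    received = set(range(total)) - lost
    return [seq for seq in range(total) if seq not in received]


lost_packets = {3, 40}
coarse = go_back_n_resend(100, lost_packets)
precise = selective_resend(100, lost_packets)

print("go-back-N resends:", coarse, "packets")
print("selective resends:", precise)
```

In this toy case two lost packets trigger 97 retransmissions under the coarse scheme and only two under the selective one, which is why targeted recovery keeps short interruptions from stalling a long job.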

Operational Visibility

Administrators gain fine-grained visibility and control over traffic paths, simplifying operations and accelerating troubleshooting. With deep telemetry from the Spectrum-X platform, teams can monitor performance in real time and adjust routing policies as needed.

Conclusion

The combination of NVIDIA Spectrum-X and MRC sets a new standard for AI networking. By moving from a single-lane approach to an intelligent, multi-path design, the fabric can handle the immense data flows required for gigascale AI. With backing from industry leaders like OpenAI, Microsoft, and Oracle, and an open specification through OCP, this technology is poised to become the backbone of tomorrow’s AI factories.

For organizations building next-generation AI infrastructure, the message is clear: networking must evolve alongside compute. Spectrum-X with MRC delivers exactly that evolution.
