How to Unify Your Hiring Data for AI-Powered Talent Acquisition

Introduction

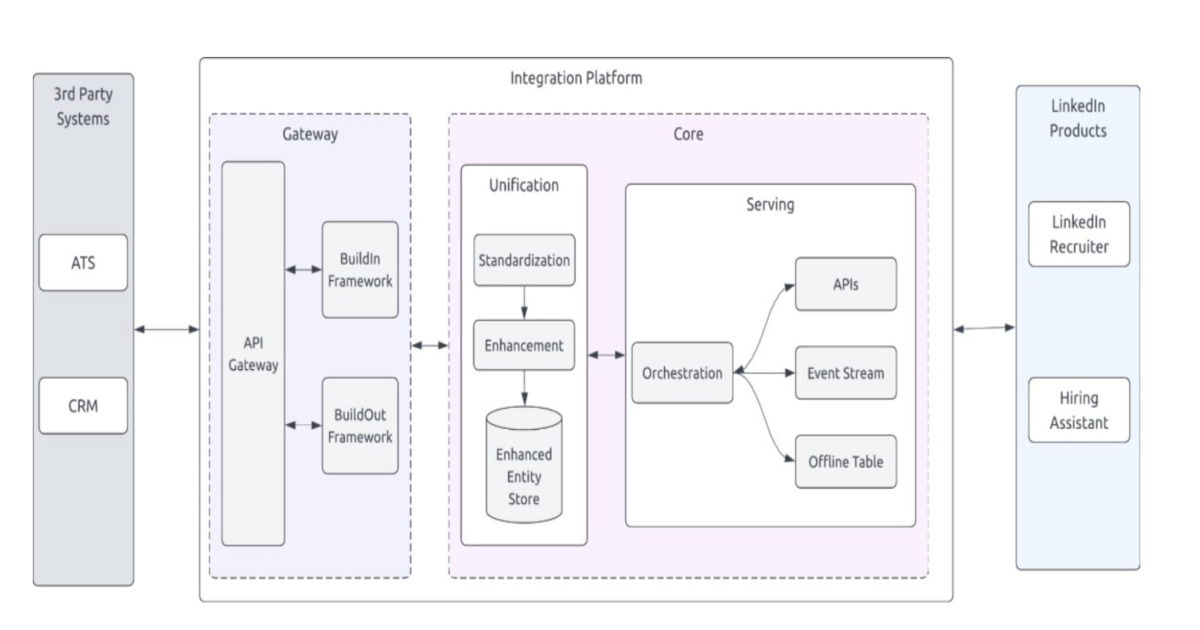

Modern talent acquisition depends on clean, consistent data flowing across multiple systems—applicant tracking systems (ATS), candidate relationship management (CRM), assessment platforms, and more. When data silos persist, hiring teams face long onboarding times, duplicate records, and unreliable analytics. LinkedIn recently demonstrated how a unified integrations platform can reduce onboarding time by 72%, boost data completeness, and unlock AI-driven hiring features. This guide walks you through the key steps to replicate that success in your organization.

What You Need

- A cross-functional team including HR IT, data engineering, recruiting operations, and a data steward.

- Access to existing hiring systems (ATS, CRM, background check providers, skill assessment tools).

- Data pipeline tools (e.g., Apache Kafka, Airflow, or a cloud-native service like AWS Glue).

- Schema definition standards (e.g., JSON Schema, Avro, or a shared data dictionary in a wiki).

- Orchestration platform for scheduling and monitoring data workflows.

- A centralized data store (data lake or warehouse) such as Snowflake, BigQuery, or Redshift.

- AI/ML tools for candidate matching, skill gap analysis, and hiring prediction.

Step-by-Step Guide

Step 1: Audit Your Current Data Landscape

Begin by mapping every system that touches candidate or hiring data. List all data sources, their formats (CSV, JSON, API), and current integration methods (batch uploads, manual entry, API calls). Document pain points: duplicate records, missing fields, or inconsistent date formats. This audit will reveal where data consistency breaks down and give you a baseline for measuring improvement.

Step 2: Define Standardized Schemas for Key Entities

Create a single canonical schema for core entities like candidate, job requisition, application, and interview feedback. Each field should have a clear name, type, allowed values, and source system. For example, standardize phone numbers to E.164 format and dates to ISO 8601. Publish this schema in a shared data dictionary and get buy‑in from all teams. This step is critical—LinkedIn’s platform succeeded because they enforced standardized schemas across all connectors.
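As a rough illustration of what enforcing the canonical schema can look like in code, here is a minimal Python sketch. The `Candidate` fields, the raw record keys (`id`, `email`, `phone`, `applied`), the list of accepted date formats, and the default country code are all assumptions made for the example, not part of any particular ATS. The phone logic is deliberately simplified (it does not handle national trunk prefixes, for instance), so treat it as a starting point rather than a complete E.164 implementation.

```python
import re
from dataclasses import dataclass
from datetime import datetime

# Hypothetical date formats seen across source systems (an assumption).
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%Y/%m/%d")


def normalize_phone(raw: str, default_country_code: str = "1") -> str:
    """Return an E.164-style string: '+' followed by at most 15 digits."""
    digits = re.sub(r"\D", "", raw)
    # Numbers without a leading '+' are assumed to be national numbers.
    if not raw.strip().startswith("+"):
        digits = default_country_code + digits
    if not digits or len(digits) > 15:
        raise ValueError(f"cannot normalize phone number: {raw!r}")
    return "+" + digits


def normalize_date(raw: str) -> str:
    """Return the date as an ISO 8601 string (YYYY-MM-DD)."""
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


@dataclass(frozen=True)
class Candidate:
    """Illustrative canonical candidate entity."""
    candidate_id: str
    email: str
    phone: str        # E.164
    applied_on: str   # ISO 8601 date
    source_system: str


def to_canonical(record: dict, source_system: str) -> Candidate:
    """Map one raw source record onto the canonical schema."""
    return Candidate(
        candidate_id=str(record["id"]),
        email=record["email"].strip().lower(),
        phone=normalize_phone(record["phone"]),
        applied_on=normalize_date(record["applied"]),
        source_system=source_system,
    )
```

Keeping the normalizers as small pure functions makes it easy to reuse them in every connector, so that each source system is mapped onto the schema in the same way.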

Step 3: Build Orchestration Workflows to Reconcile Data

Set up an orchestration engine (like Apache Airflow) to run regular data pulls from each source system. Each workflow should transform raw data into the standardized schema, detect and resolve conflicts (e.g., two systems reporting different candidate emails), and merge records into a unified view. Use idempotent logic so that re‑running workflows doesn’t create duplicates. Orchestration also lets you schedule these processes during off‑peak hours and get alerts on failures.
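The reconciliation logic inside such a workflow might look something like the following Python sketch. It uses a simple "latest update wins" rule for conflicts, which is only one possible policy and not necessarily the one LinkedIn uses; the record keys (`candidate_id`, `updated_at`, `email`) and the in-memory dictionary standing in for the unified store are assumptions for illustration.

```python
from datetime import datetime


def _updated_at(record: dict) -> datetime:
    """Parse the record's ISO 8601 'updated_at' timestamp."""
    return datetime.fromisoformat(record["updated_at"])


def merge_records(unified: dict, incoming: list) -> dict:
    """Upsert incoming records into `unified`, keyed by candidate_id.

    When two records disagree, the one with the later 'updated_at' wins.
    Ties keep the existing record, so replaying a batch changes nothing.
    """
    for record in incoming:
        key = record["candidate_id"]
        current = unified.get(key)
        if current is None or _updated_at(record) > _updated_at(current):
            unified[key] = dict(record)
    return unified


# Two systems report different emails for the same candidate.
batch = [
    {"candidate_id": "c-1", "email": "old@example.com",
     "updated_at": "2024-03-01T09:00:00", "source_system": "crm"},
    {"candidate_id": "c-1", "email": "new@example.com",
     "updated_at": "2024-03-05T14:30:00", "source_system": "ats"},
]

store = merge_records({}, batch)
store = merge_records(store, batch)  # re-running is a no-op
```

Because records are keyed by `candidate_id` and only strictly newer updates replace existing ones, running the same batch twice leaves the store unchanged, which is the idempotency property the step calls for.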

Step 4: Centralize Data Processing in a Single Repository

Stream the reconciled data into a centralized data lake or warehouse. This serves as the “single source of truth” for all hiring teams. Ensure the repository supports versioning and lineage tracking so you can trace any data point back to its original source. Centralizing processing eliminates point‑to‑point integrations and makes it much easier to add new systems later—just build one new connector to the central store instead of connecting to every other system.


Step 5: Enable AI‑Driven Features on Top of Clean Data

With consistent, complete data in one place, you can now deploy AI models. Examples include candidate‑job matching using skills extracted from resumes (standardized against the schema), predictive attrition based on hiring pipeline velocity, and intelligent screening that shortlists applicants matching your success criteria. Because the unified schema ensures models receive the same fields in the same format every time, it removes a common source of prediction errors. LinkedIn reports that its platform reduced onboarding time by 72% partly because AI features no longer struggled with messy input.

Step 6: Monitor, Test, and Iterate

Treat your integration platform as a living system. Set up dashboards to track data freshness, completeness scores, and error logs. Conduct regular reconciliation audits between the central store and source systems. When you adopt a new hiring tool, extend the schema and add a workflow for it promptly. Continuous improvement sustains whatever onboarding gains you achieve (LinkedIn's reported figure was 72%) and keeps data drift from undermining your AI models.
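The completeness and freshness metrics mentioned above can be computed with very little code. The sketch below is one possible definition, not a standard one: completeness is the share of required fields that are populated across all records, and a dataset is considered stale when its last load is older than a configurable threshold. The field names and the 24-hour default are assumptions.

```python
from datetime import datetime, timedelta

# Hypothetical required fields from the canonical schema (an assumption).
REQUIRED_FIELDS = ("candidate_id", "email", "phone", "applied_on")


def completeness_score(records: list, required=REQUIRED_FIELDS) -> float:
    """Fraction of required fields that are populated across all records."""
    total = len(records) * len(required)
    if total == 0:
        return 1.0
    filled = sum(
        1
        for record in records
        for field in required
        if record.get(field) not in (None, "")
    )
    return filled / total


def missing_required(records: list, required=REQUIRED_FIELDS) -> list:
    """List (candidate_id, field) pairs where a required field is empty."""
    return [
        (record.get("candidate_id"), field)
        for record in records
        for field in required
        if record.get(field) in (None, "")
    ]


def is_stale(last_loaded_at: datetime, now: datetime,
             max_age: timedelta = timedelta(hours=24)) -> bool:
    """True when the most recent load is older than `max_age`."""
    return now - last_loaded_at > max_age
```

A scheduled workflow could call these functions after each load and raise an alert when the score drops below an agreed threshold or the data goes stale.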

Tips for Success

- Start small—choose two or three core systems first, prove the workflow, then expand.

- Involve recruiting operations early; they know which data fields matter most day‑to‑day.

- Automate data quality checks in the orchestration workflows (e.g., flag null required fields).

- Use incremental loading instead of full refreshes to reduce latency and server load.

- Document every schema decision and share it with all platform users to avoid drift.

- Consider hiring a data engineer specializing in HR tech if your team lacks internal expertise.

- Plan for security and privacy—GDPR, CCPA, and other regulations may require data masking or retention policies.
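The incremental loading tip above is commonly implemented with a "high-water mark": remember the latest `updated_at` value you have processed and, on the next run, pull only rows changed after it. The following Python sketch assumes each source row carries an ISO 8601 `updated_at` timestamp and that the watermark is persisted between runs by the orchestrator; both are assumptions for illustration.

```python
from datetime import datetime


def incremental_batch(rows: list, watermark: datetime) -> tuple:
    """Return rows changed after `watermark` plus the new watermark."""
    new_rows = [
        row for row in rows
        if datetime.fromisoformat(row["updated_at"]) > watermark
    ]
    new_watermark = max(
        (datetime.fromisoformat(row["updated_at"]) for row in new_rows),
        default=watermark,  # nothing new: keep the old watermark
    )
    return new_rows, new_watermark


source_rows = [
    {"candidate_id": "c-1", "updated_at": "2024-03-01T09:00:00"},
    {"candidate_id": "c-2", "updated_at": "2024-03-05T14:30:00"},
    {"candidate_id": "c-3", "updated_at": "2024-03-07T08:15:00"},
]

# The previous run processed everything up to 2 March.
changed, next_mark = incremental_batch(source_rows, datetime(2024, 3, 2))
```

In practice the filter would usually be pushed down into the source query or API call, so that unchanged rows are never transferred at all.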

By following these steps, you can build a hiring data pipeline that rivals LinkedIn’s consolidated platform. The result: faster onboarding, higher data quality, and AI tools that actually deliver on their promise.
